BIG DATA

Data Engineering & Big Data Platforms

Large, fast-moving datasets are only an asset if your architecture can hold them. We design data platforms that turn high-volume, high-variety data into something your business can actually query, trust, and act on — and that an AI layer can consume cleanly when you are ready for one.

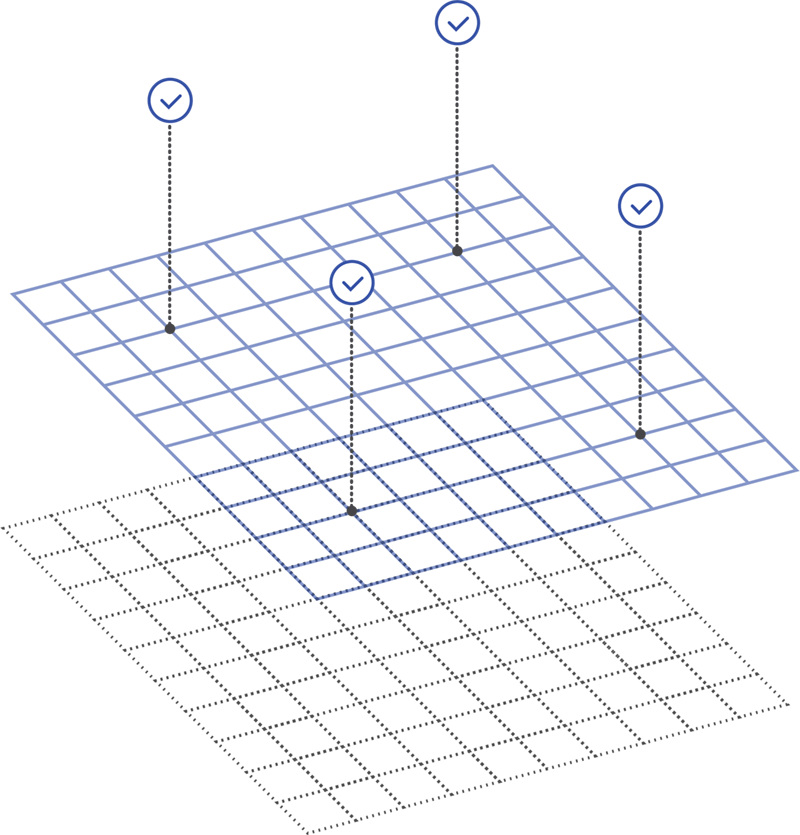

THE PLATFORM PRINCIPLE

Reliable, accessible, AI-query-ready from day one. We build for the realities of scale — throughput, cost, and data governance — so your information stays usable as it grows, instead of becoming a data swamp that outpaces your ability to use it.

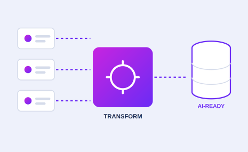

Engineering the Full Pipeline

We architect the full pipeline: ingestion, storage, transformation, and access. That means clean data models, event-driven patterns, and API surfaces designed so analytics and machine-learning workloads are first-class consumers, not afterthoughts.

We build for the realities of scale (throughput, cost, and data governance) rather than treating big data as a buzzword. The result is a platform where your information is reliable, accessible, and AI-query-ready from the start, instead of a data swamp that grows faster than your ability to use it.